Good AI data practices for SMEs and start-ups

Posted: Tue 31st Mar 2026

Artificial intelligence (AI) depends heavily on data quality and management.

Without the right data practices, even the most advanced AI models can fail to deliver accurate or useful results.

Getting AI data right is essential if you're to build a system that performs well, adapts to new challenges and provides reliable insights.

In this session, John Hauxwell outlines 10 best practices to follow to help you manage AI data effectively and make sure your AI projects succeed.

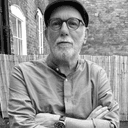

About the speaker

John is a seasoned practice lead, fractional CxO and board adviser with over 30 years of experience.

As a data governance, digital transformation, solutions and strategic architecture lead, he specialises in creating programmes and frameworks that help businesses innovate, transform and unlock valuable business insights.

Transcript

Lightly edited for clarity.

Ryan: Good afternoon, everyone, and welcome to today's Lunch and Learn. My name is Ryan, and I will be your host today.

For those of you attending Lunch and Learn for the first time, Enterprise Nation is a vibrant community platform for start-ups and small businesses.

Today, I'm really pleased to introduce John Hauxwell, founder and CDO of AIdentity. In this session, John outlines 10 best practices to help you manage AI data effectively and make sure that your AI projects succeed.

As always, if you've got questions, post them in the chat and we'll do our best to answer them at the end. The session is recorded as well, and the follow-up email with the recording and any further resources will go out later today, so keep an eye out for that.

So, over to you, John.

John Hauxwell: Hello, everybody. Thank you very much for coming along today. It's great to see so many of you here.

I'm John Hauxwell. I'm the CDO of AIdentity. We are a data advisory practice that supports SMEs and small businesses to help them grow their data awareness, data understanding and data use, which is a particularly relevant topic at the moment given the expansion of AI.

Without further ado, let me just pull together a view of my screen so you can see it, and we'll crack on.

Right, so we're talking today about data practices for SMEs, start-ups and scale-ups.

The important thing to understand is that it doesn't matter whether you're a one-man band, five people, 50 people or 500 people. Your data practices should fundamentally have the same starting point.

They may evolve and become more advanced, but the fundamentals of your data processing, management and control should be the same, no matter how big your company is.

So if we think about those fundamentals in terms of process, we have to think about things like understanding your data.

What do I want to do with my data? What is the strategy for it? Is it to help me promote sales? Does it help me provide company information? Is it to support AI and applications in my business?

Where does my data come from? What are the sources? How reliable is it? How true is it?

How am I connecting to my data? APIs, tokens, how is it controlled? What levels of security are there?

What do the data systems look like? Are they cloud-based? What are we using? How are we ingesting our data? How are we storing it? What are we using it for?

Are we using data lakes, warehouses or lakehouses? Are we using big LLMs, small LLMs, smaller data, big data, SQL, NoSQL?

These are all technical questions that you need to ask yourselves to understand your data landscape.

What process do we have around governance, privacy, ethics and security? There's a little box there called GOPERS.

What does my governance engine look like? What are the processes around privacy, ethics and security? What do my datasets look like when that's applied? How am I using them? What am I doing with them?

And then, if you look over on the left-hand side, you'll see things like analytics and containers. Are we using virtualisation? Are we using cloud? What are we doing with it?

These are all things you need to understand and be aware of within your business so you can put together a well-constructed data-processing and data-management approach with your internal and external customers.

That way, you have a congruent view of your data across the company, so sales doesn't start arguing with marketing, and marketing doesn't argue with the CEO, and technical doesn't get caught in the middle while everyone asks why the figures don't match.

These are all things you should understand and eliminate from your business because they're very costly and very time-consuming.

If you look at the bottom diagram, you can see a basic flowchart, with the grey boxes running across the top.

High-level assessment, detailed assessment, your as-is state, where you want to be, what your KPIs are, and what your basic GOPERS programme needs to look like.

The white boxes underneath show the supporting parts of that. Your assessment criteria, your acceptance criteria for your current state, your planning, your OKRs and SMART objectives for your future state.

Then at the end, you have a reporting state. How do you manage it? How do you report it? What do your dashboards look like? How are they built? How are they integrated? Who has control of them?

Because once you give someone control, you're also giving them authority, and usually responsibility, for that data.

When things go wrong, that responsibility has to sit somewhere.

A RACI matrix is a very good idea. Responsible, accountable, consulted and informed.

If you know who those people are within your organisation, then when things go wrong, you know who is responsible. If no one is responsible, nothing gets done.

No changes are made, no criteria are set, and it just becomes a he-said, she-said exercise. You need that responsibility and accountability in your business. If you're not doing that, don't use data. Leave it alone.

Unless somebody is going to take true cost of ownership for that data, both personally and on behalf of the business, it's a waste of time.
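As an illustration of the RACI point above (this was not shown in the session), here is a minimal Python sketch that checks each dataset has someone responsible and someone accountable. The function name `raci_gaps`, the dictionary layout and the "exactly one accountable person" rule are assumptions made for the example; the last is a common RACI convention rather than something John states.

```python
def raci_gaps(raci):
    """Return the datasets that lack a responsible person or a single accountable person."""
    gaps = []
    for dataset, roles in raci.items():
        has_responsible = bool(roles.get("responsible"))
        # Assumption: exactly one accountable person per dataset.
        has_one_accountable = len(roles.get("accountable", [])) == 1
        if not (has_responsible and has_one_accountable):
            gaps.append(dataset)
    return gaps


# Hypothetical example data.
raci = {
    "customers": {"responsible": ["Asha"], "accountable": ["Ben"],
                  "consulted": [], "informed": ["Cara"]},
    "sales": {"responsible": [], "accountable": ["Ben"]},
}
print(raci_gaps(raci))
```

Running a check like this whenever the matrix changes is one way to catch the "no one is responsible" case before something goes wrong.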

Once you've got your acceptance criteria and documentation together, they should be written to an immutable record.

Here at AIdentity, we use blockchain for that. We actually write all the documents and agreed processes to the block so that everyone can see it, and it's immutable. If anybody changes it, that change gets recorded.

So you have full provenance of your data governance, your systems and all the accountability, responsibility and controls that you need to have.
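To make the idea of an immutable, tamper-evident record concrete, the sketch below chains each document to the hash of the one before it, so any later change is detectable. This is a simplified, single-machine illustration in Python and is not AIdentity's blockchain implementation; the names `append_record` and `verify_chain` are hypothetical.

```python
import hashlib
import json


def _digest(prev_hash, document):
    """Hash a document together with the previous entry's hash."""
    payload = json.dumps({"prev": prev_hash, "doc": document}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(chain, document):
    """Append a document to the chain, linked to the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else "0" * 64
    entry = {"prev": prev_hash, "doc": document, "hash": _digest(prev_hash, document)}
    chain.append(entry)
    return entry


def verify_chain(chain):
    """Return True only if every entry still matches its recorded hash and link."""
    prev_hash = "0" * 64
    for entry in chain:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["doc"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A real deployment would also need the chain to be replicated or anchored somewhere that a single person cannot rewrite; this sketch only shows why an edited document no longer verifies.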

It's a fairly long-winded way of doing things, but we find it is absolutely the best way.

Because a lot of people say, "Yes, we've got a governance process, we've got it written down," and then they just shove it in a SharePoint.

People say they've read it, but nobody ever does anything with it. It just sits gathering digital dust.

That's not what you want. You want this stuff to be effective, not just gathering dust in a filing cabinet.

So let's talk about best data practices. If you're talking about AI, data quality and data management are key. They are really important.

If you don't have the right amount of the right data, with the right practice, management and control, your LLMs and AI will hallucinate to the nth degree.

And the last thing you want is to release something that says 25 people are attending a football match and the fire brigade are coming.

It's not what you want to happen. We know that, you know that, and your customers know that.

So please think about it. When you're building AI, get the data right, because it is essential. If you want your systems to perform well and provide reliable insights, it is essential.

So let's talk about a quick top 10.

First, collect relevant and high-quality data.

Don't collect things that are useless to you. Make sure it's relevant, on point, and aligned to what you're trying to do. Make sure it's the best quality you can get.

Don't just go and buy it from some data dealer down the road. It's going to be unreliable, incomplete, full of errors, and it's going to give you bad results.

The old phrase, "garbage in, garbage out", is exactly what you're going to get with an LLM.

If you put a bad image into an image model, you get a bad image out. Photoshop doesn't magically change the quality of your image, and AI doesn't magically improve the quality of your data.

It uses the same data and produces results that are congruent with that quality.

So your data should reflect a real-world scenario, be clean and error-free, and balanced.

It should represent all classes and categories fairly.

If you're doing something like segmentation, you need a good mix of people in all areas so you don't get irrelevant or too-small data segments that confuse your AI.

Make sure your data is on point but also broad enough to provide targeted insight, rather than just generic or inaccurate datasets.
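One simple way to check the balance described above is to count how much of the dataset each class or segment makes up. The following Python sketch does that; the function names and the 10% threshold are assumptions chosen for illustration, not figures from the session.

```python
from collections import Counter


def class_shares(labels):
    """Return the fraction of the dataset that each label accounts for."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


def underrepresented(labels, min_share=0.10):
    """List labels whose share falls below min_share (an assumed threshold)."""
    shares = class_shares(labels)
    return sorted(label for label, share in shares.items() if share < min_share)


# Hypothetical segment labels.
segments = ["retail"] * 18 + ["trade"] + ["export"]
print(underrepresented(segments))
```

Segments flagged this way are candidates for collecting more data or for merging into a broader category.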

Second, ensure data diversity and representation.

All models perform best when they're trained on data that covers the widest possible inputs.

Avoid bias. Include samples from different demographics. This reduces the risk of unfair or inaccurate predictions.

If you're doing facial recognition or computer vision, you need different skin tones, ages, lighting conditions, hats, no hats, glasses, beards and no beards. You need the diversity of information so your AI can understand it properly.

You need to think clearly about your criteria and make sure the data reflects the range of things you want the model to recognise.

Third, data privacy and data security.

How important are those in today's world? Very.

Data privacy is massive. Everybody is aware of it. GDPR certainly is. The EU AI Act is very hot on it. Companies are now facing big fines, and that will become more prevalent over the next few months.

Companies in regulated sectors such as finance and healthcare also have things like SOC 2, HIPAA and GDPR to comply with. It's all important.

People spend a huge amount of time on this.

So have a data-processing agreement, a GDPR agreement, and make sure you're in touch with your local ICO. Make sure you have documentation from them and that you are actually permitted to process the data you're collecting.

If you're not, that is a major risk. Make sure you are clear with the ICO before you do any of this.

Fourth, label data accurately and consistently.

Everyone thinks, yes, I've put data into my AI and it'll spit things out. Yes, it will, but not necessarily correctly, because the data has no provenance or metadata attached to it.

The AI doesn't understand what that data means. You have to tell it what the data is.

If the name is Colchester, is that a train stop, a place name, a style of boot, a kind of clothing, or a town in Essex?

You need to make sure your data is labelled consistently. If you've got Colchester and Reading, they're both towns. That's what the label should say.

So make sure it is correct.

If you're labelling images, for example, define exact criteria for each object and avoid ambiguity.

Data should be unambiguous. You need to be accurate and concise. Be really clear about what your data means and what it is you're actually looking at.

If you don't know, find somebody who does. Ask questions. Never be afraid to have too much metadata. It's much better to have too much than not enough.
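As a rough illustration of consistent labelling, the sketch below checks labels against an agreed vocabulary and flags values that have been labelled in more than one way. The vocabulary, the function name `find_label_problems` and the sample records are all hypothetical.

```python
# Assumed controlled vocabulary; a real project would agree its own.
ALLOWED_LABELS = {"town", "train_station", "footwear"}


def find_label_problems(records, allowed=ALLOWED_LABELS):
    """Return (unknown, conflicting) for a list of (value, label) pairs.

    unknown: pairs whose label is not in the agreed vocabulary.
    conflicting: values that appear with more than one label.
    """
    unknown = []
    first_label = {}
    conflicting = set()
    for value, label in records:
        if label not in allowed:
            unknown.append((value, label))
        if first_label.setdefault(value, label) != label:
            conflicting.add(value)
    return unknown, sorted(conflicting)


records = [
    ("Colchester", "town"),
    ("Reading", "town"),
    ("Colchester", "Town"),   # inconsistent capitalisation
    ("Reading", "city"),      # label outside the vocabulary
]
unknown, conflicting = find_label_problems(records)
```

A conflict is not always an error (Colchester can be both a town and a station), but each one is worth a human decision rather than leaving the model to guess.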

Fifth, if you're expanding your training set and you don't have all the data, you can use synthetic data or cleverly manipulated images and noise to give your dataset the diversity and structure you need.

This helps reduce overfitting. Think of it like making sure the engine doesn't blow up because you're trying to force too much in.

This is really important in areas like computer vision and speech recognition, where you need to recognise things like facial expressions, intonation, phrasing, timing and pauses for effect.

You need to make sure your systems understand how that works.
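For numeric data, one basic form of the augmentation described above is to add small random noise to existing samples. The Python sketch below shows the idea; the function name, the Gaussian noise and the default noise level are assumptions for illustration, and image or speech augmentation would use domain-specific transforms instead.

```python
import random


def augment_with_noise(samples, copies=2, noise=0.05, seed=0):
    """Return the original samples plus `copies` noisy variants of each one."""
    rng = random.Random(seed)  # fixed seed so the result is reproducible
    augmented = [list(sample) for sample in samples]
    for sample in samples:
        for _ in range(copies):
            augmented.append([x + rng.gauss(0, noise) for x in sample])
    return augmented


originals = [[1.0, 2.0], [3.0, 4.0]]
expanded = augment_with_noise(originals)
```

Augmented copies should only ever go into the training set, so that validation and testing still measure performance on real data.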

Sixth, think about how you split your data into the right proportions for training, validation and testing.

Personally, I like 70, 15, 15. A lot of data in the training set, because that is where you get your accuracy from. Not from validation or testing, but from how you actually train your AI or LLM to work properly.

The datasets you use and the questions you ask your AI will define how accurate the responses are.

If you want your AI to give you information that is genuinely useful for business decision-making, you need to make sure it is well trained. If you don't, you're wasting your time.

Don't overlap your training and validation sets. And particularly don't overlap your testing and training sets.

They should be distinct, uniquely correlated and bounded, so you can set objectives around each dataset, such as size, length and string length.

That allows you to say: this is what we did, this is what the result was, and this is how we will improve it.
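The 70/15/15 split with no overlap can be sketched in a few lines of Python. This is a minimal illustration that assumes the items are unique and can be shuffled freely; the function name and the fixed seed are choices made for the example, and time-ordered or grouped data would need a different approach.

```python
import random


def split_dataset(items, train=0.70, validation=0.15, seed=42):
    """Shuffle once, then cut into three non-overlapping slices.

    Whatever remains after the training and validation slices becomes the test set.
    """
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train)
    n_val = round(len(shuffled) * validation)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],
    )


train_set, val_set, test_set = split_dataset(range(100))
```

Because the three sets are slices of one shuffled list, no item can land in more than one of them, and the fixed seed means the same split can be reproduced when results are compared later.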

Seventh, monitor data quality.

It's a bit like water quality. If you don't measure it, something is going to go wrong.

Data quality degrades over time. In a world of real-time streaming data, once it's gone through the pipe and out the other end, it's gone.

So you need automated checks to detect anomalies, missing values or changes in the data distribution – in other words, shifts in the data flowing through your pipeline.

If you think of measuring something like fuel flow in a jet engine through digital twinning, and that starts to drop off, you need to know whether that's a real flow problem, a faulty sensor, or an issue further up or down the chain. That's the level of understanding you want.
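A very simple automated check along these lines compares each new batch of readings with a trusted baseline. The Python sketch below flags a high share of missing values and a large shift in the average; the function name and both thresholds are assumptions, and it cannot by itself tell a real flow problem from a faulty sensor.

```python
from statistics import mean, stdev


def check_batch(baseline, batch, max_missing=0.05, max_shift=3.0):
    """Return warnings for a batch compared with a baseline of at least two values.

    "missing": more than max_missing of the readings are None.
    "drift": the batch mean is more than max_shift baseline standard deviations away.
    """
    warnings = []
    present = [value for value in batch if value is not None]
    missing_rate = 1 - len(present) / len(batch) if batch else 1.0
    if missing_rate > max_missing:
        warnings.append("missing")
    spread = stdev(baseline)
    if present and spread > 0:
        shift = abs(mean(present) - mean(baseline)) / spread
        if shift > max_shift:
            warnings.append("drift")
    return warnings


# Hypothetical fuel-flow readings.
baseline = [10.0, 10.5, 9.5, 10.2, 9.8]
print(check_batch(baseline, [10.1, 9.9, 10.0]))
print(check_batch(baseline, [2.0, None, 2.1, None]))
```

In a streaming pipeline a check like this would run on every window of data, with the warnings sent to whoever is responsible for that dataset.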

Eighth, document data sources and processing.

Keep a record of everything you do. If you change the data, that should be recorded somewhere. This is commonly called data lineage or data provenance.

When you go to buy meat from a farm shop, they tell you where it came from, what field it grazed in and what it ate.

The same should be true of your data. Where did it come from? What has happened to it? What is the source? How was it combined? Who is responsible? Where is it now? These are all things you need to know.

Your documentation should include the data origin, the collection method, cleaning and transformation procedures, labelling instructions and quality checks.

Does it actually meet the standards we set? Does it get a green tick? These are all things that matter.
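The documentation fields listed above map naturally onto a small record kept alongside each dataset. The Python sketch below is one possible shape for it; the class name, field names and example values are assumptions, not a standard schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class LineageRecord:
    """Where a dataset came from and what has been done to it."""
    origin: str
    collection_method: str
    owner: str
    labelling_instructions: str = ""
    transformations: list = field(default_factory=list)
    quality_checks: dict = field(default_factory=dict)

    def log_transformation(self, description, changed_by):
        """Record a cleaning or transformation step with a UTC timestamp."""
        self.transformations.append({
            "when": datetime.now(timezone.utc).isoformat(),
            "what": description,
            "who": changed_by,
        })

    def passes_all_checks(self):
        """The 'green tick': at least one check is recorded and all of them passed."""
        return bool(self.quality_checks) and all(self.quality_checks.values())


# Hypothetical usage.
record = LineageRecord(origin="crm_export", collection_method="nightly batch", owner="data lead")
record.log_transformation("removed duplicate customers", changed_by="jo")
```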

Ninth, data storage and management.

We all think data storage is infinite. It's not. Cloud hosting gives us a lot, but we all know how quickly 100 gigabytes disappears.

I thought 100 gigs was plenty. I'm now at around 10 terabytes when I'm training and testing models, and that's my personal work, not company-scale models.

Data hosting is cheap, yes. Cloud storage can be very cheap. But if you really want to get into it, you need to make sure your storage, backup and historical records are all compressed, stored and structured the right way.

Is it immediate data? Historical data? How quickly do I need it? Is it customer data? How is it used?

If you're mapping a customer journey with multiple touchpoints, all of that information should be available to the customer service rep – whether that's a human or an AI one – so they can see where people are in that journey.

If that's an AI customer rep, it needs all the transcripts and context of everything that has happened so far in order to form an accurate response.

That's hard. And if you're in advertising, it gets even harder when cookies disappear.

Real-time advertising becomes much more difficult unless you're dealing with first-party origin data, data wallets, agentic AI and MCP to actually give you useful information in real time.

And tenth, collaborate across teams.

There is no point in having all the data stuck in separate silos, in separate AIs under someone's desk, using shadow AI rather than something the company actually supports.

Make sure everything is correlated properly. Make sure everyone is using the same data.

I've set up things like federated data warehouses and data marketplaces inside businesses so that the data is available to everybody at the same time, and it is accurate for everybody.

So it doesn't matter whether it's data science, engineering, legal or business – they have the same policies, the same standards, and the same data.

Communication across practices should be regular, organised and structured.

And if you're doing regulatory work, those stakeholders should be informed in the background all the time about any changes you make.

Also show the data flow, show where the teams collaborate, and show where the roles and responsibilities sit.

Again, make sure people understand their responsibilities.

So if we think about a data quality framework, this simple matrix gives you 26 data requirements listed under headings like validity, completeness, uniqueness, timeliness, consistency and accuracy.

I'm sure you've all heard those before in terms of data. People like me bang on about them all the time because they really matter.

There's no point saying that the data type isn't important, or the description doesn't matter, or that you haven't got time to label it. It is important.
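Some of these dimensions can be measured directly. The Python sketch below scores completeness, uniqueness and timeliness for a list of records; it is not the 26-requirement matrix from the slide, and the function name, field names and 30-day freshness window are assumptions for illustration.

```python
from datetime import date


def quality_report(rows, key, required, max_age_days, today):
    """Score a non-empty list of dict rows on three data quality dimensions.

    completeness: share of rows with every required field filled in.
    uniqueness: distinct key values divided by number of rows.
    timeliness: share of rows whose "updated" date is within max_age_days.
    """
    total = len(rows)
    complete = sum(
        all(row.get(name) not in (None, "") for name in required) for row in rows
    )
    distinct_keys = len({row.get(key) for row in rows})
    fresh = sum((today - row["updated"]).days <= max_age_days for row in rows)
    return {
        "completeness": complete / total,
        "uniqueness": distinct_keys / total,
        "timeliness": fresh / total,
    }


# Hypothetical customer records.
rows = [
    {"id": 1, "email": "a@example.com", "updated": date(2026, 3, 30)},
    {"id": 2, "email": "", "updated": date(2026, 2, 1)},
    {"id": 2, "email": "c@example.com", "updated": date(2025, 1, 1)},
    {"id": 3, "email": "d@example.com", "updated": date(2026, 3, 29)},
]
report = quality_report(rows, key="id", required=["id", "email"],
                        max_age_days=30, today=date(2026, 3, 31))
```

Validity, consistency and accuracy usually need business rules or a trusted reference source, so they are left out of this sketch.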

What does my data world actually look like? What does this dataverse look like for me? How do I make sure all of these things fit together with dimensions, assessments, indexes, recommendations, quality assurance and regulatory assurance?

These are things you have to have in place. Don't think you can stand back and ignore them. If you don't and something goes wrong, you are liable.

That's not a good place to be. If the ICO comes knocking at your door, that is not where you want to be. You want to be able to say: here is my documentation, here is my process, here is my data, it is all correct.

So if you think about this as a blueprint for success, imagine trying to build a skyscraper without a solid foundation.

Your business data is no different. A data quality framework acts as your blueprint. If you've got that, you know what's going on. If you don't have that, it's all guesswork.

This is not just about fixing errors. It's about ensuring your data is fit for purpose and that the quality is always good.

Quality data fuels smarter decisions, drives efficient operations and underpins success.

For small companies, that's really important. We cannot afford to squander money. Every penny we spend needs to count.

If you don't do that, you're throwing good money after bad. So please think carefully about your data before you start an AI project.

Look at the framework you're using. Validity, completeness, uniqueness, accuracy, consistency and timeliness.

These are great drivers not just for data, but for business decisions, products and internal processes too.

When you think about data quality, remember that it underpins everything else you do.

Your business decisions, product decisions, financial control, release strategy, buy, sell, mergers and acquisitions – everything is shaped by the quality of your data.

Your share price is controlled by the quality of your data. Your value is controlled by the quality of your data.

So let's make sure we get it right.

Ryan: Brilliant. Thank you, John.

A couple of questions, John.

First one: what would you say are the most common data quality issues you see organisations overlook when they're starting an AI project?

John Hauxwell: Accuracy and timeliness are the main two.

Everyone says, "I've got all this historical data, and I don't have time to capture everything in real time because my pipeline is full." So they decide to base decisions on historical data.

But we all know that AI only looks backwards and builds on what you've already done. So you want to build on the most current, accurate data.

Make sure the data you've got is complete, accurate and current, and feed it into your AI in real time if you can.

Ryan: Got it. Brilliant. Thank you, John.

And thinking about the audience – probably people on the smaller end of the scale – what would you say smaller teams with limited resources, people and money, can do to apply these best practices?

John Hauxwell: Limited resources, people and money – yes, that's always difficult.

Everyone thinks, "I'm going to take some data, build something with AI, and it's going to generate new revenue for us or new product streams."

Communication is vital. If you're a small team, communicate. Even if it's only five minutes each, if you're the CxO, walk around and say hello. Ask people what they're doing, what's troubling them and what they need.

If you're working in a more agile way, have a 10-minute stand-up every morning where everyone gets together, discusses what's been done, what they're doing and what they need.

Have a requirements board so people can say, "I need this information," instead of inventing it themselves.

That gives everybody the best possible view of the best data you can have, without bringing in a large data audit team or translation team to sort it all out for you.

If you're using something like Copilot, make sure the data you're feeding it has been agreed by everybody. Have an agreement process around it.

Get everyone to sign off on it if you want. Make sure there is a RACI in place so people know who is responsible and accountable, who gets consulted and who gets informed.

That way everybody knows what's going on, and the people who are responsible know they are responsible.

Then you don't get "it's your job" or "no, it's their job." Avoid that.

Because when things go wrong, and they will, you need to know not who to blame, but who is responsible.

Blame is a difficult thing. In small businesses, blame is horrible. Don't do it. If something goes wrong, it's a company-wide failure, not just one person's. You need to be very aware of that when you start a project.

Say, "Look, this is new technology, a new idea, a new part of the business. Let's not play the blame game. We're all in this together." So work together and collaborate.

And yes, if needed, give someone the stakeholder role, or let them act as the project manager or agile lead within the business.

But make sure everything is catalogued, backlogged, on your Kanban board, and visible, so everybody knows what's happening and there are no hidden agendas or shadow agendas within the business.

Ryan: Amazing. That's brilliant. So many good tips there, John.

We've run just a little over, but I think that was really worth it.

Big thank you, John. I think that was really interesting.
